RunPod

Train, fine-tune and deploy AI models with RunPod

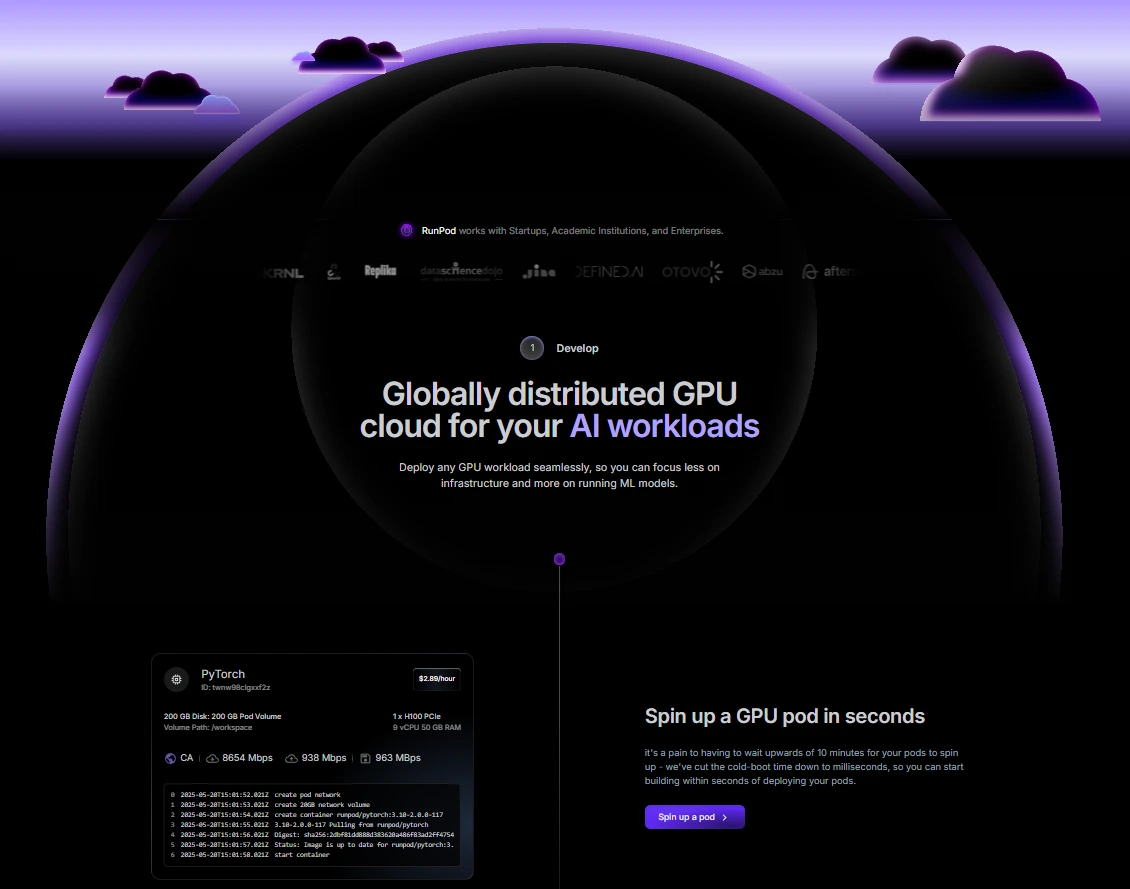

RunPod is a cloud computing platform tailored for AI, machine learning, and general compute workloads. It provides scalable, high-performance GPU and CPU resources, enabling users to develop, train, and deploy AI models efficiently.

With offerings like dedicated GPU Pods and Serverless endpoints, RunPod caters to a wide range of computational needs.

- Pod Pricing (per hour):
  - Community Cloud:
    - RTX A4000 (16GB VRAM): $0.17/hr
    - RTX 3090 (24GB VRAM): $0.22/hr
    - A100 PCIe (80GB VRAM): $1.19/hr
  - Secure Cloud:
    - A40 (48GB VRAM): $0.40/hr
    - L40 (48GB VRAM): $0.69/hr
    - H100 PCIe (80GB VRAM): $1.99/hr
    - MI300X (192GB VRAM): $2.49/hr
- Serverless Pricing (per second):
  - A4000 (16GB VRAM): $0.00016/sec
  - A100 (80GB VRAM): $0.00076/sec
  - H100 PRO (80GB VRAM): $0.00116/sec
  - H200 PRO (141GB VRAM): $0.00155/sec
- Storage:
  - Pod Volume & Container Disk: $0.10/GB/month (running), $0.20/GB/month (idle)
  - Network Volume: $0.07/GB/month (<1TB), $0.05/GB/month (>1TB)
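Per-second serverless rates and per-hour Pod rates are easier to compare on a common basis. The sketch below converts a per-second rate into an hourly equivalent and estimates the utilization at which an always-on Pod becomes the cheaper option. It assumes the listed A100 serverless rate and the A100 PCIe Community Cloud Pod rate describe comparable hardware, which the listing does not confirm. The function names are illustrative and not part of any RunPod tooling.

```python
SECONDS_PER_HOUR = 3600


def hourly_equivalent(rate_per_second: float) -> float:
    """Cost of one hour of continuous execution at a per-second rate."""
    return rate_per_second * SECONDS_PER_HOUR


def break_even_utilization(pod_rate_per_hour: float,
                           serverless_rate_per_second: float) -> float:
    """Fraction of each hour a serverless worker must be busy before an
    always-on Pod at the given hourly rate costs less."""
    return pod_rate_per_hour / hourly_equivalent(serverless_rate_per_second)


# Rates copied from the pricing list; treating the two A100 offerings
# as equivalent hardware is an assumption.
A100_POD_PER_HOUR = 1.19
A100_SERVERLESS_PER_SECOND = 0.00076

if __name__ == "__main__":
    hourly = hourly_equivalent(A100_SERVERLESS_PER_SECOND)
    ratio = break_even_utilization(A100_POD_PER_HOUR, A100_SERVERLESS_PER_SECOND)
    print(f"Serverless A100, busy for a full hour: ${hourly:.3f}")
    print(f"Break-even utilization vs. Pod: {ratio:.1%}")
```

Under these assumptions, an hour of continuous serverless execution costs about $2.74, so a workload that is busy less than roughly 43% of the time would cost less on serverless. The estimate ignores cold starts, storage charges, and savings-plan discounts.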

Tool Summary

| Attribute | Value |
| --- | --- |
| Value Rating | ★★★★ (4/5) |
| Price Tier | Paid |
| Cost | $ (1/5) |
| Category | Uncategorized |

Features

- Dedicated GPU Pods: Run containerized workloads on dedicated GPU or CPU instances.

- Serverless Endpoints: Deploy AI models with autoscaling and pay-per-second billing.

- Preconfigured Templates: Access over 50 templates for rapid deployment of common environments.

- Command-Line Interface: Manage deployments programmatically using RunPod's CLI tool.

- Savings Plans: Commit to usage in advance for discounted rates on certain resources.

Common Use Cases

- AI Model Training: Leverage powerful GPUs for training complex machine learning models.

- Inference Serving: Deploy models for real-time inference with autoscaling capabilities.

- Data Processing: Utilize high-performance compute resources for large-scale data analysis.

- Research & Development: Access affordable GPU resources for experimental and development purposes.

Pros ✅

- Cost-Effective GPU Access: Competitive pricing, starting at $0.17/hr for an RTX A4000 on Community Cloud.

- Flexible Deployment: Choice between dedicated Pods and Serverless endpoints to suit various workloads.

- Rapid Scaling: Serverless endpoints can scale from zero to hundreds of GPUs in seconds.

- Global Infrastructure: Presence in over 30 regions across North America, Europe, and South America.

- Zero Ingress/Egress Fees: No additional charges for data transfer.

Cons ❌

- Billing During Idle Time: Pods incur charges even when not actively utilized.

- Learning Curve: New users may require time to familiarize themselves with the platform's features and configurations.

- Limited Long-Term Storage Options: Persistent storage solutions may not be as robust as those of some competitors.
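The idle-billing point can be made concrete with the Pod volume storage rates listed in the pricing section ($0.10/GB/month running, $0.20/GB/month idle). The sketch below estimates a month's storage charge for a Pod that runs only part of the time. It assumes the two rates are prorated linearly by time, which the listing does not state, and `pod_volume_monthly_cost` is a hypothetical helper rather than anything RunPod provides.

```python
RUNNING_RATE = 0.10  # $/GB/month while the Pod is running (from the pricing list)
IDLE_RATE = 0.20     # $/GB/month while the Pod is idle (from the pricing list)


def pod_volume_monthly_cost(size_gb: float, running_fraction: float) -> float:
    """Estimate one month's Pod volume charge.

    running_fraction is the share of the month the Pod is running (0.0 to 1.0).
    Linear proration between the two rates is an assumption.
    """
    if not 0.0 <= running_fraction <= 1.0:
        raise ValueError("running_fraction must be between 0 and 1")
    blended_rate = (running_fraction * RUNNING_RATE
                    + (1.0 - running_fraction) * IDLE_RATE)
    return size_gb * blended_rate


if __name__ == "__main__":
    for fraction in (1.0, 0.25, 0.0):
        cost = pod_volume_monthly_cost(100, fraction)
        print(f"100 GB, running {fraction:.0%} of the month: ${cost:.2f}")
```

With these inputs, a 100 GB volume attached to a Pod that never runs would cost about $20 for the month, twice the charge for the same volume on a Pod that runs continuously, so deleting unused volumes matters more than stopping the Pod.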